ZooKeeper cluster installation and configuration

1. Download a stable release of ZooKeeper (download page: https://github.com/apache/zookeeper/releases).

2. Upload the downloaded archive to the Linux host (CentOS 7 here) and extract it to the target location (/usr/local/ here): tar zxfv zookeeper-3.4.14.tar.gz -C /usr/local/

3. Rename the extracted directory to zookeeper: mv zookeeper-3.4.14 zookeeper

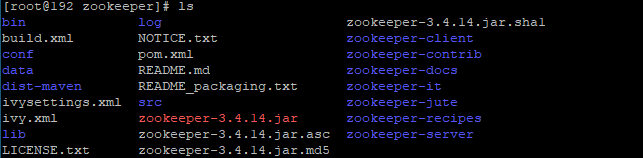

4. Take three machines: 192.168.118.131, 192.168.118.132 and 192.168.118.133. On all three machines the extracted zookeeper directory looks as shown.

Open the conf directory, which holds ZooKeeper's configuration files. Use cp to copy zoo_sample.cfg to zoo.cfg; zoo.cfg is the configuration file we need.

$ cd conf && ls

configuration.xsl log4j.properties zoo.cfg

zoo_sample.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes. Data directory

dataDir=/usr/local/zookeeper/data

# Transaction log directory

dataLogDir=/usr/local/zookeeper/log

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

# Cluster members: server.<myid>=<host>:<communication port>:<election port>

server.1=192.168.118.131:2888:3888

server.2=192.168.118.132:2888:3888

server.3=192.168.118.133:2888:3888
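The limits in this file are counted in ticks, so the real timeouts depend on tickTime. As a rough sketch, the effective values can be computed from a zoo.cfg like this. The helper name zk_timeouts is made up for illustration, and the parsing assumes plain key=value lines without spaces around the equals sign.

```shell
#!/bin/sh
# Print the effective initLimit/syncLimit timeouts (in ms) for a zoo.cfg file.
# zk_timeouts is a hypothetical helper name, not part of ZooKeeper.
zk_timeouts() {
    # Skip comment lines; if a key appears twice, the last value wins.
    awk -F= '
        /^[[:space:]]*#/ { next }
        $1 == "tickTime"  { tick_ms = $2 }
        $1 == "initLimit" { init_ticks = $2 }
        $1 == "syncLimit" { sync_ticks = $2 }
        END {
            printf "initLimit timeout: %d ms\n", tick_ms * init_ticks
            printf "syncLimit timeout: %d ms\n", tick_ms * sync_ticks
        }
    ' "$1"
}

# Demo with the values used in the configuration above.
demo_cfg="$(mktemp)"
printf 'tickTime=2000\ninitLimit=10\nsyncLimit=5\n' > "$demo_cfg"
zk_timeouts "$demo_cfg"
rm -f "$demo_cfg"
```

With tickTime=2000, initLimit=10 and syncLimit=5 this reports 20000 ms and 10000 ms respectively.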

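5. On each machine, create a file named myid in the data directory (dataDir). Its content is the number that identifies this machine and must match the N of the machine's server.N line in zoo.cfg. Once all three machines are configured, start each one with zkServer.sh start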

[root@192 data]# ls

myid

[root@192 data]# vim myid

1

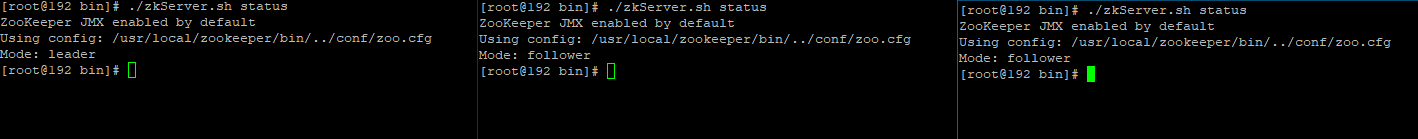
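Instead of typing the id by hand on every machine, it could be looked up from the server.N lines of zoo.cfg. The following is only a sketch: myid_for_host is a hypothetical helper, and the temporary directory stands in for the real dataDir.

```shell
#!/bin/sh
# Look up the id N of the "server.N=<host>:..." line that matches a host.
# myid_for_host is a hypothetical helper name, not part of ZooKeeper.
myid_for_host() {
    # Split on "=" and ":" so that $1 is "server.N" and $2 is the host.
    awk -F'[=:]' -v host="$2" '
        $1 ~ /^server\.[0-9]+$/ && $2 == host {
            sub(/^server\./, "", $1)
            print $1
            exit
        }
    ' "$1"
}

# Demo in a temporary directory standing in for dataDir.
work="$(mktemp -d)"
cat > "$work/zoo.cfg" <<'EOF'
server.1=192.168.118.131:2888:3888
server.2=192.168.118.132:2888:3888
server.3=192.168.118.133:2888:3888
EOF
myid_for_host "$work/zoo.cfg" 192.168.118.132 > "$work/myid"
cat "$work/myid"
rm -rf "$work"
```

The function prints nothing when the host is not listed, so an empty myid file would be a sign that the address does not appear in zoo.cfg.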

6. Running zkServer.sh status on each machine shows its role: one machine is the leader and the other two are followers.
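If the status output of all machines is collected in one place, the role check can be automated. This is a minimal sketch that only looks at the "Mode:" lines printed by zkServer.sh status; check_ensemble_roles is a hypothetical helper and how the output is gathered (ssh, docker exec, and so on) is left open.

```shell
#!/bin/sh
# Summarise the "Mode:" lines from the combined output of zkServer.sh status.
# check_ensemble_roles is a hypothetical helper name; it reads text on stdin.
check_ensemble_roles() {
    awk '
        $1 == "Mode:" && $2 == "leader"   { leaders++ }
        $1 == "Mode:" && $2 == "follower" { followers++ }
        END {
            printf "leaders=%d followers=%d\n", leaders, followers
            # Succeed only when exactly one leader was seen.
            exit (leaders == 1 ? 0 : 1)
        }
    '
}

# Demo with status text as it might be collected from three machines.
printf 'Mode: follower\nMode: leader\nMode: follower\n' | check_ensemble_roles
```

The non-zero exit status when the leader count is not one makes the function usable in a deployment script as a simple health gate.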

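7. Connect to ZooKeeper. zkCli.sh -h prints the usage, which shows that we can connect with ZooKeeper -server host:port cmd args. Once connected, the ls command shows some of the data registered by dubbo.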

#zkCli.sh -server 192.168.118.131:2181

[zk: 192.168.118.131:2181(CONNECTED) 17] ls /dubbo

[metadata, org.mydubbo.api.DubboApi, org.ztzhang.uumsapi.TokenService, org.dubboapi.DubboApi]

Docker cluster deployment

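First create the data and log directories that will be mounted, and in each data directory create a myid file containing the corresponding id. Then write the configuration file below, where each member is declared as server.{myid}=ip or hostname:communication port (2888):election port (3888). Finally, mount the directories into the containers and start the containers one by one.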

dataDir=/data

dataLogDir=/datalog

# Heartbeat interval between servers, or between client and server, in milliseconds

tickTime=2000

# Number of ticks a follower may take to connect and sync to the leader at startup: 10*2000 ms

initLimit=10

# Timeout, in ticks, for sync communication between leader and follower: 5*2000 ms

syncLimit=5

clientPort=2181

autopurge.snapRetainCount=3

autopurge.purgeInterval=0

# Maximum number of concurrent connections from a single client IP

maxClientCnxns=60

standaloneEnabled=false

admin.enableServer=true

server.1=zk1:2888:3888

server.2=zk2:2888:3888

server.3=zk3:2888:3888
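The preparation described before this configuration (data and log directories plus one myid file per node) could be scripted roughly as follows. prepare_zk_dirs is a hypothetical helper, and the dataN/logN/config layout is taken from the host paths mounted by the docker run commands in this section.

```shell
#!/bin/sh
# Create per-node data and log directories and write each node's myid.
# prepare_zk_dirs is a hypothetical helper name, not part of ZooKeeper or Docker.
prepare_zk_dirs() {
    zk_base="$1"
    zk_count="$2"
    # Shared directory for zoo.cfg, mounted at /conf in every container.
    mkdir -p "$zk_base/config"
    i=1
    while [ "$i" -le "$zk_count" ]; do
        mkdir -p "$zk_base/data$i" "$zk_base/log$i"
        # myid holds only the number N that matches server.N in zoo.cfg.
        echo "$i" > "$zk_base/data$i/myid"
        i=$((i + 1))
    done
}

# Demo against a temporary directory; on the real host the base would be
# /home/docker/zk as used in this section.
demo_base="$(mktemp -d)"
prepare_zk_dirs "$demo_base" 3
cat "$demo_base/data1/myid" "$demo_base/data2/myid" "$demo_base/data3/myid"
rm -rf "$demo_base"
```

The zoo.cfg file itself still has to be placed in the config directory by hand.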

docker run -d --rm --name zk1 --hostname="zk1" -v /home/docker/zk/config/:/conf/ -v /home/docker/zk/data1:/data -v /home/docker/zk/log1:/datalog -p ::2181 -p ::2888 -p ::3888 --network inet zookeeper:3.9.2 &&

docker run -d --rm --name zk2 --hostname="zk2" -v /home/docker/zk/config/:/conf/ -v /home/docker/zk/data2:/data -v /home/docker/zk/log2:/datalog -p ::2181 -p ::2888 -p ::3888 --network inet zookeeper:3.9.2 &&

docker run -d --rm --name zk3 --hostname="zk3" -v /home/docker/zk/config/:/conf/ -v /home/docker/zk/data3:/data -v /home/docker/zk/log3:/datalog -p ::2181 -p ::2888 -p ::3888 --network inet zookeeper:3.9.2

requireClientAuthScheme = none

zhangzhitong@zhangzhitong-virtual-machine:/home/docker$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5aa9904ee9cb zookeeper:3.9.2 "/docker-entrypoint.…" 10 seconds ago Up 8 seconds 8080/tcp, 0.0.0.0:32849->2181/tcp, :::32849->2181/tcp, 0.0.0.0:32850->2888/tcp, :::32850->2888/tcp, 0.0.0.0:32851->3888/tcp, :::32851->3888/tcp zk3

e97146b48c51 zookeeper:3.9.2 "/docker-entrypoint.…" 10 seconds ago Up 9 seconds 8080/tcp, 0.0.0.0:32846->2181/tcp, :::32846->2181/tcp, 0.0.0.0:32847->2888/tcp, :::32847->2888/tcp, 0.0.0.0:32848->3888/tcp, :::32848->3888/tcp zk2

f04fd5f8a96b zookeeper:3.9.2 "/docker-entrypoint.…" 11 seconds ago Up 10 seconds 8080/tcp, 0.0.0.0:32843->2181/tcp, :::32843->2181/tcp, 0.0.0.0:32844->2888/tcp, :::32844->2888/tcp, 0.0.0.0:32845->3888/tcp, :::32845->3888/tcp zk1

zhangzhitong@zhangzhitong-virtual-machine:/home/docker$ docker exec f04fd5f8a96b /apache-zookeeper-3.9.2-bin/bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: follower

zhangzhitong@zhangzhitong-virtual-machine:/home/docker$ docker exec 5aa9904ee9cb /apache-zookeeper-3.9.2-bin/bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: follower

zhangzhitong@zhangzhitong-virtual-machine:/home/docker$ docker exec e97146b48c51 /apache-zookeeper-3.9.2-bin/bin/zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /conf/zoo.cfg

Client port found: 2181. Client address: localhost. Client SSL: false.

Mode: leader

ZooKeeper data structure

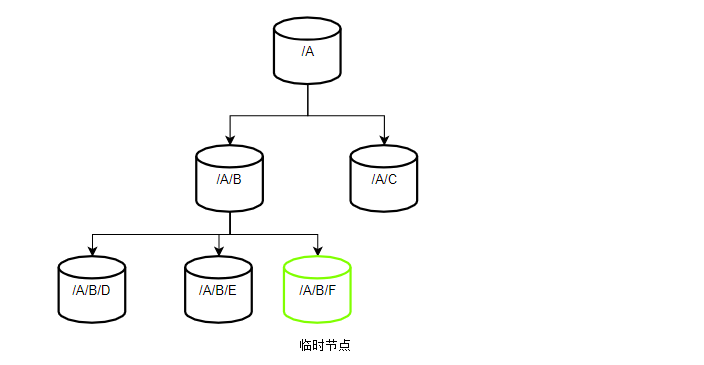

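ZooKeeper's data model resembles a file system. Each node is called a znode and is addressed by a path such as /A/S/D/F. Every znode holds its data (data), its access control list (ACL), metadata such as transaction ids and version numbers (stat), and references to its children (child). Znodes are meant for small amounts of data only: a single znode holds at most 1 MB. Clients keep a long-lived connection to ZooKeeper, and an ephemeral node is deleted once the session of the client that created it ends, for example after the connection is lost and the session times out.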
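Because the namespace is a tree, a path such as /A/S/D/F implies the parent znodes /A, /A/S and /A/S/D, and a plain create fails when the parent does not exist yet. As a small illustration, the ancestors of a path can be listed in creation order. znode_ancestors is a hypothetical helper and does not talk to ZooKeeper at all.

```shell
#!/bin/sh
# Print every ancestor of a znode path, from the top level down to the path itself.
# znode_ancestors is a hypothetical helper name, not a ZooKeeper command.
znode_ancestors() {
    printf '%s\n' "$1" | awk -F/ '{
        prefix = ""
        for (i = 1; i <= NF; i++) {
            # Skip the empty field before the leading "/" and any doubled "/".
            if ($i == "") continue
            prefix = prefix "/" $i
            print prefix
        }
    }'
}

znode_ancestors /A/S/D/F
```

Each printed line is a path that would have to exist, in this order, before the next one can be created.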

docker run -d --restart always --name zk --hostname="zk" -v /home/docker/zk/zk_data:/data -p 2181:2181 -p ::2888 -p ::3888 --network inet zookeeper:3.9.2